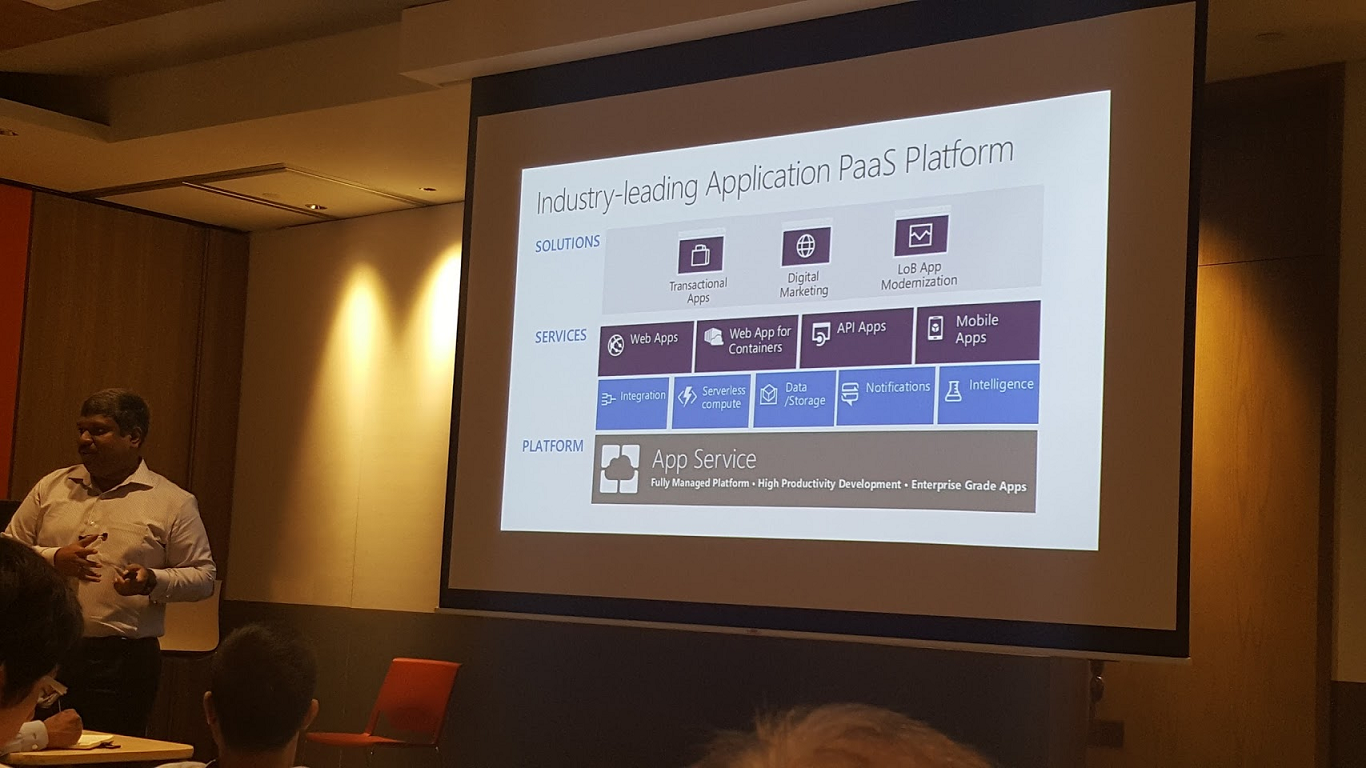

A few days ago, the Cloud & Enterprise team at Microsoft Singapore organized an event in their downtown office about developing modern applications on Microsoft Azure. As a member of a young startup using Microsoft Azure services, I decided to join the event to find out more about the tools that we have been using daily in our development journey. Hence, this article is to share what I learnt in that 8-hour Azure event.

Modern App Development with Azure event

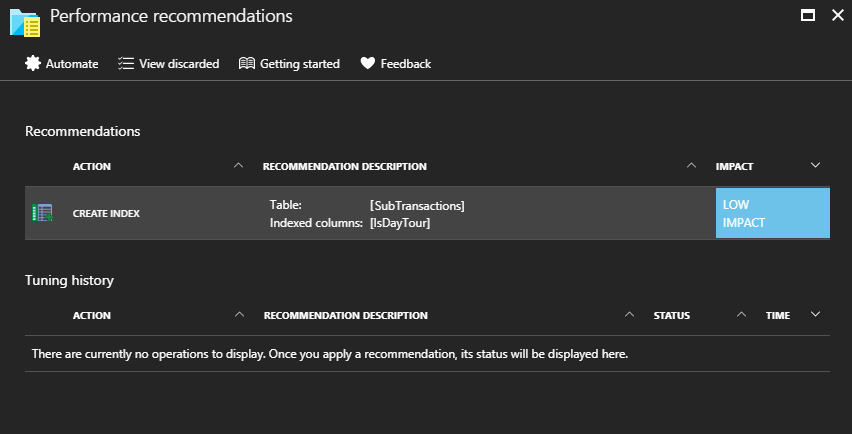

Azure Advisor

Even though our team has been using Microsoft Azure for almost three years, throughout this event we found out that there are still many new and useful tools that we were not aware of, for example, Azure Advisor. Azure Advisor, a personalized recommendation guide, analyzes our usage and configuration in order to provide suggestions regarding the availability, security, performance, and cost of our Azure resources.

Azure Advisor also enables us to find the top recommendations based on their potential impact, such as adding an index to a table to improve the performance of Azure SQL databases.

DIPR and Web Application Firewall

Azure Advisor also integrates with Azure Security Center to offer security recommendations. Speaking of security, at break time we also discussed two ways of securing our web apps on Azure. Although they were not part of the event agenda, they are still worth highlighting here. Firstly, by using DIPR (Dynamic IP Address Restriction), we can easily control through web.config the set of IP addresses and address ranges which are allowed or denied access to the web apps. Secondly, another tool highlighted during our break-time discussion was Application Gateway. It has a feature known as Web Application Firewall which helps simplify the security management of web applications.
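
To picture what an allow/deny rule set decides for each request, here is a minimal Python sketch. It is only an illustration of the idea, not how IIS or App Service evaluates web.config; the address ranges, the `is_allowed` name, and the `allow_unlisted` flag are all made up for this example.

```python
import ipaddress

# Hypothetical allow-list: one office range and one single address.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.7/32"),
]


def is_allowed(client_ip: str, allow_unlisted: bool = False) -> bool:
    """Return True if the client address may reach the web app."""
    address = ipaddress.ip_address(client_ip)
    if any(address in network for network in ALLOWED_NETWORKS):
        return True
    # Addresses matching no rule fall back to the default policy.
    return allow_unlisted
```

With `allow_unlisted` left at `False`, only the listed ranges get through, which is the stricter of the two default policies.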

Testing in Production

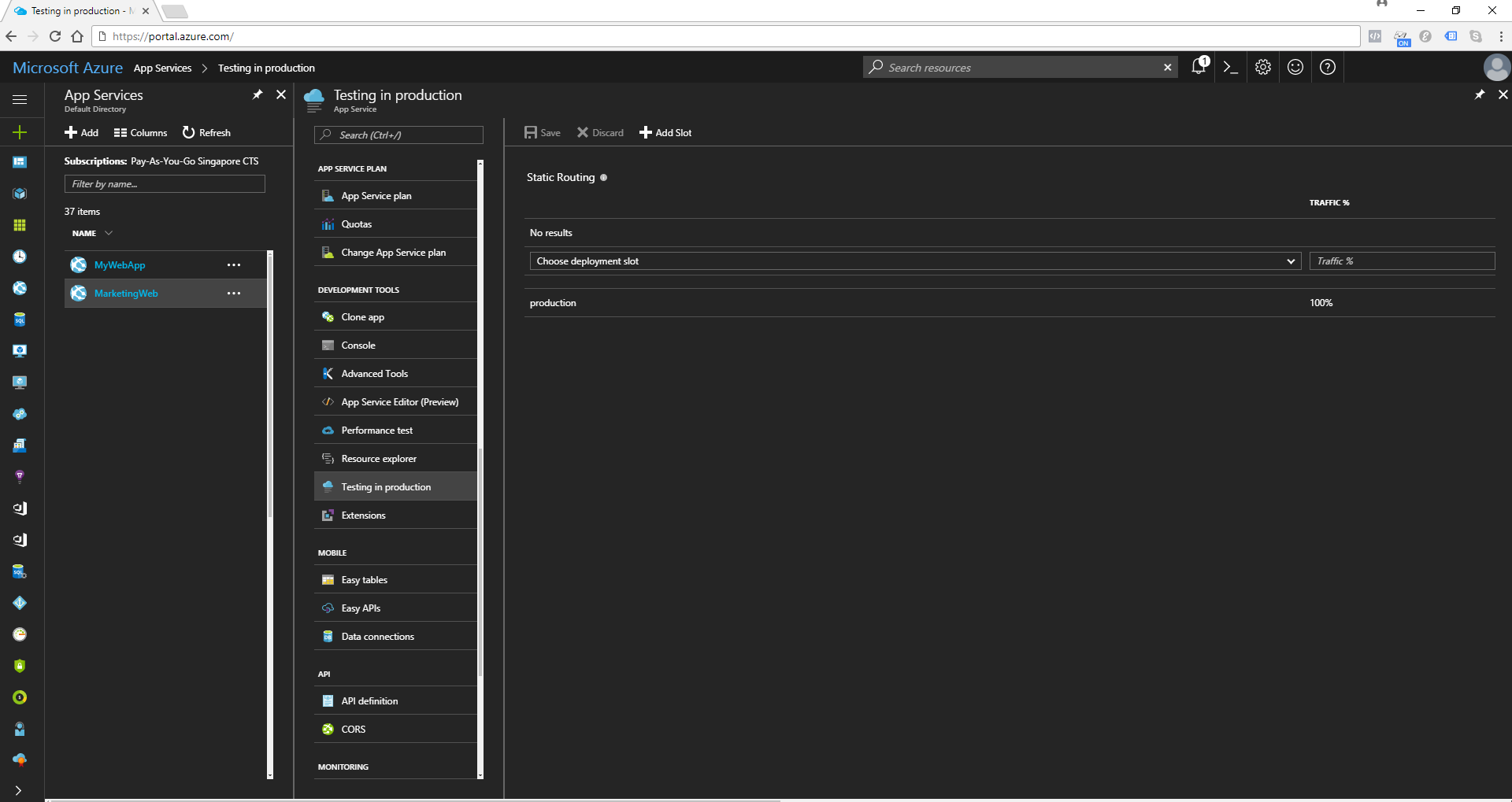

Working in a startup environment, most of the time, when we want to find out the best online promotional and marketing strategies, we will do A/B testing. In Microsoft Azure, there is a feature known as Testing in Production (previously also known as Traffic Routing). To do A/B testing, normally we will prepare two or three versions of our website. After publishing the different versions to different deployment slots under the same App Service, we then choose the “Testing in production” option in the settings blade of our web app.

Testing in production option uses deployment slots so the web app must run in Standard or Premium tier.

Normally in a successful A/B test, users will be randomly presented with just one of the versions. Hence, the Testing in Production feature randomly chooses a version based on the percentages we configure, and each user remains on that version throughout his/her visit as long as the same ARR (Application Request Routing) Affinity cookie is presented.
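
The routing behaviour described above (a weighted random pick on the first visit, then sticking to that pick via a cookie) can be sketched in a few lines of Python. This is my own simplified model, not Azure's implementation; the slot names, the percentages, and the `assigned_slot` cookie name are assumptions for illustration.

```python
import random

# Hypothetical slot names and traffic percentages.
SLOT_WEIGHTS = {"production": 70, "variant-a": 20, "variant-b": 10}
COOKIE_NAME = "assigned_slot"  # stand-in for the real affinity cookie


def choose_slot(cookies: dict, rng: random.Random) -> str:
    """Return the slot for this request, honouring an existing cookie."""
    slot = cookies.get(COOKIE_NAME)
    if slot in SLOT_WEIGHTS:
        return slot  # returning visitor: stay on the same version
    slots = list(SLOT_WEIGHTS)
    weights = list(SLOT_WEIGHTS.values())
    slot = rng.choices(slots, weights=weights, k=1)[0]
    cookies[COOKIE_NAME] = slot  # remember the choice for later requests
    return slot
```

Passing the random generator in makes the sketch easy to seed, so the split can be checked over many simulated visitors.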

Performance Test

Besides understanding which version of our website suits the market best, we also need to make sure our web applications can handle a high volume of visitors and usage. That’s where the Performance Test plays an important role.

Yay, we have only 1 failed request under the load test of 250 concurrent users over 5 minutes!

The load test simulates load on our web app over a specific time period and measures the response of our app, such as how fast our app responds to 250 concurrent users. Please take note that the load test should be done in a non-production environment so that customers using our production app won’t be affected.

Elastic Database Pool

In our current startup, we have at least one database for each of our overseas markets. In order to accommodate unpredictable periods of usage in each market, we decided to switch to Elastic Database Pool. In the past, we would either over-provision or under-provision our database resources. Over-provisioning wastes money, while under-provisioning sacrifices the performance of our app. Now, with the help of Elastic Database Pool, unused DTUs can be shared across multiple databases, which reduces the DTUs needed and the overall cost.

Different databases in the same pool with different peak DTU.
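
To see why sharing helps when the databases peak at different times, here is a small back-of-the-envelope calculation in Python. The database names and hourly DTU figures are invented for illustration, and real pools come in fixed sizes, so treat this as a rough sketch of the reasoning rather than a sizing tool.

```python
# Hypothetical DTU usage for three market databases over four time slots.
usage = {
    "market_sg": [10, 80, 20, 10],
    "market_my": [15, 10, 90, 10],
    "market_id": [10, 15, 10, 70],
}

# Standalone: each database is sized for its own peak.
standalone_dtus = sum(max(series) for series in usage.values())

# Pooled: the pool is sized for the peak of the combined load.
combined = [sum(slot) for slot in zip(*usage.values())]
pooled_dtus = max(combined)

print(standalone_dtus, pooled_dtus)  # prints "240 120"
```

Because the three peaks fall in different time slots, the pool in this made-up example needs half the DTUs that three separately sized databases would.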

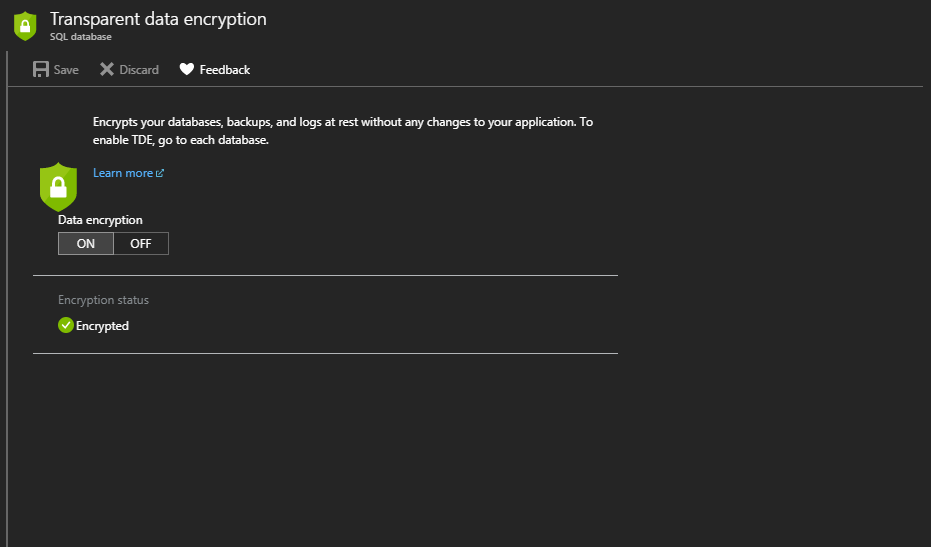

Transparent Data Encryption (TDE)

During the discussion of security, the term TDE was mentioned. TDE in Azure SQL helps protect against the threat of malicious activity by performing real-time encryption and decryption of the database, associated backups, and transaction log files at rest without requiring changes to our app. The storage of the entire database is encrypted by using a symmetric key known as the Database Encryption Key, which is protected by a built-in server certificate. One thing that we need to be aware of is that backups of databases with TDE enabled are also encrypted by using the Database Encryption Key. Hence, when we restore these backups, the certificate protecting the Database Encryption Key must be available.

Transparent Data Encryption (TDE) option in Azure SQL database.

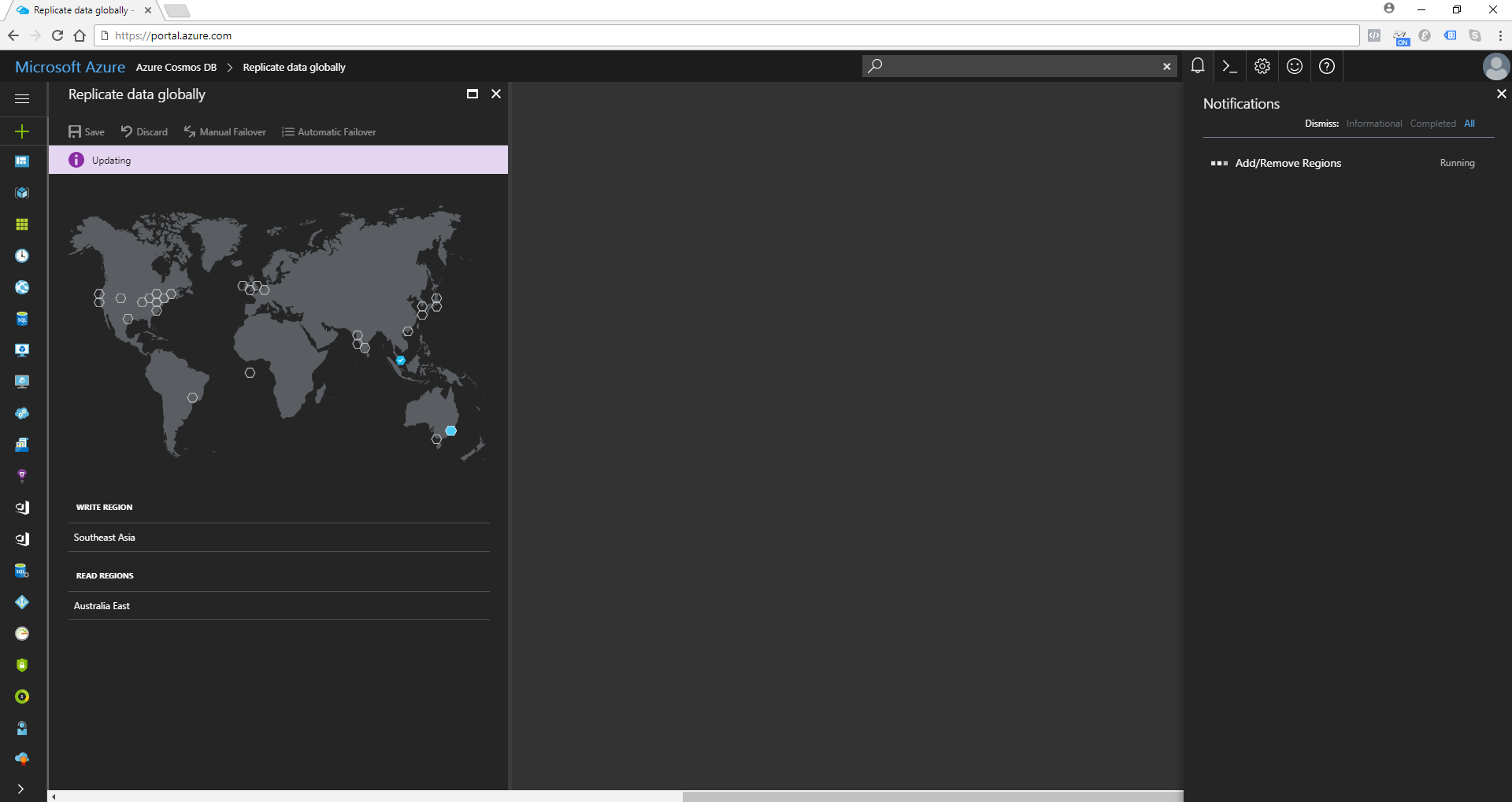

Cosmos DB and DocumentDB

Cosmos DB is a schema-free database system designed for scalable, broadly distributed, highly responsive, and highly available applications. It supports DocumentDB, one of the NoSQL APIs. Cosmos DB is available in all Azure regions by default. We can easily add a second region as a Read Region simply by clicking on a location on the map. After that, we will be allowed to use the Automatic Failover function, which enables Cosmos DB to perform automatic failover of the Write Region to one of the Read Regions in the rare event of a data center outage.

Updating the Read Regions of a Cosmos DB (time taken is about 5-10 minutes).

During the talk, we also discussed how Cosmos DB indexes data. Cosmos DB is truly schema-agnostic*. Hence, by default, all data stored in Cosmos DB is indexed without developers dealing with schema and index management. However, Cosmos DB still allows us to specify a custom indexing policy for collections.

A poorly crafted user interface may stay for years, but a database can haunt an enterprise for decades. – Paul Hoehne, Schema Agnosticism: What It Is and Why You Should Care, 2015

Working at a fast-paced startup, developers are expected to deliver features and products fast. Hence, designing the database properly is one of our priorities. We always try to choose a schema-free option like Cosmos DB to avoid the longer and more complicated development effort that relational database design brings.

*Sometimes Microsoft describes Cosmos DB as schema-agnostic and sometimes as schema-free. Schema-agnostic and schema-free are not the same. Schema-agnostic databases are not bound by schemas but are aware of schemas. A schema-free database, however, is not bound by any schema rules beyond correct syntax.

Serverless computing can be described as follows.

- Servers are fully abstracted: developers do not have to worry about infrastructure and server provisioning;

- It supports triggers based on activity in a SaaS service, so scaling is event-driven instead of resource-driven;

- It saves cost because billing is based on resource consumption and execution, so we only pay for what we use.

During the talk, Logic Apps and Functions were introduced.

Logic Apps

Logic Apps provide a way to implement workflows easily and in a visual way. Hence, developers do not need to worry about hosting and can easily automate the workflows they have in place without writing a single line of code. For example, suppose our Marketing team would like to know when a new product is uploaded to our partner’s e-commerce website. All we have to do is build a Logic App as shown in the screenshot below. After that, our partner just needs to send a POST request to the URL of our Logic App when they upload a new product to their system.

Using Logic Apps Designer to define a simple workflow.
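
On the partner's side, the POST request could look roughly like the Python sketch below. The URL is a placeholder (the real one is generated by the Logic App), and the product fields are assumptions, since the actual payload depends on what the workflow expects. The request is only built here, not sent, so the sketch runs offline.

```python
import json
import urllib.request

# Placeholder URL; the real callback URL comes from the Logic App itself.
LOGIC_APP_URL = "https://example.invalid/workflows/new-product/invoke"


def build_notification(product: dict) -> urllib.request.Request:
    """Build (but do not send) the POST request for a newly uploaded product."""
    body = json.dumps(product).encode("utf-8")
    return urllib.request.Request(
        LOGIC_APP_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_notification({"name": "Sample product", "price": 9.9})
# urllib.request.urlopen(request) would actually send it.
```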

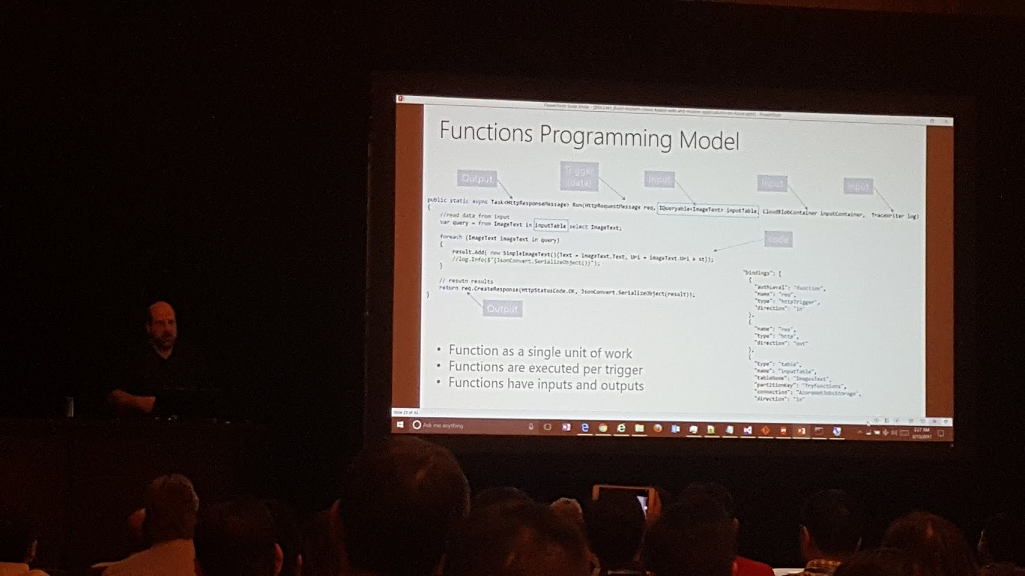

Azure Functions

Another serverless computing service is Azure Functions. Unlike a Logic App, which is a workflow triggered by an event, a Function is code triggered by an event. Functions allow developers to focus on the code for only the problem they want to solve without worrying about the infrastructure.

Stefan Schackow, Principal Program Manager, talked about Azure Functions in Microsoft Tech Summit Singapore in March 2017

Azure Functions offer an HTTP trigger which executes the function in response to an HTTP request. Using this feature, I once tried to serve a static web page, index.html, from an Azure Function, as shown in the C# code below.

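    using System.IO;
    using System.Net;
    using System.Net.Http;
    using System.Net.Http.Headers;
    using System.Threading.Tasks;

    // HTTP-triggered function that returns index.html as the response body.
    public static Task<HttpResponseMessage> Run(HttpRequestMessage req, TraceWriter log)
    {
        var response = new HttpResponseMessage(HttpStatusCode.OK);

        // Open the file read-only; the function never writes to it.
        var stream = new FileStream(@"d:\home\site\wwwroot\HttpTriggerCSharp1\index.html", FileMode.Open, FileAccess.Read);

        response.Content = new StreamContent(stream);
        response.Content.Headers.ContentType = new MediaTypeHeaderValue("text/html");

        // Nothing is awaited, so wrap the finished response in a completed task.
        return Task.FromResult(response);
    }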

This is just a quick hack. For a more advanced solution, please read the article Serving Static Files from Azure Functions written by Anthony Chu, Microsoft MVP.

Azure API Management

APIs have become engines of growth in today’s economy. Hence there is a need to manage APIs so that we can achieve the following goals.

- Package and publish APIs to external parties;

- Provide developers a self-service portal to get started with the APIs easily;

- Ramp up developers with documentation, samples, and an API console;

- Build an API facade for existing back-end services;

- Easily add new capabilities to the APIs, for example response caching;

- Reliably secure the APIs from misuse and abuse by providing trial subscriptions and limiting the number of transactions per minute;

- Have insights about the usage and health of the APIs.
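
To make the "limit the number of transactions per minute" goal above more concrete, here is a minimal fixed-window rate limiter in Python. It only illustrates the general idea of per-subscription throttling; it is not how Azure API Management implements it, and the class and parameter names are my own.

```python
import time
from collections import defaultdict


class PerMinuteLimiter:
    """Fixed-window counter: at most `limit` calls per key per 60 seconds."""

    def __init__(self, limit: int, clock=time.monotonic):
        self.limit = limit
        self.clock = clock
        # key -> (window index, number of calls seen in that window)
        self.windows = defaultdict(lambda: (0, 0))

    def allow(self, subscription_key: str) -> bool:
        window = int(self.clock() // 60)
        last_window, count = self.windows[subscription_key]
        if window != last_window:
            count = 0  # a new minute has started
        if count >= self.limit:
            return False
        self.windows[subscription_key] = (window, count + 1)
        return True
```

Each subscription key gets its own counter, so a trial subscriber hitting the limit does not affect other callers.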

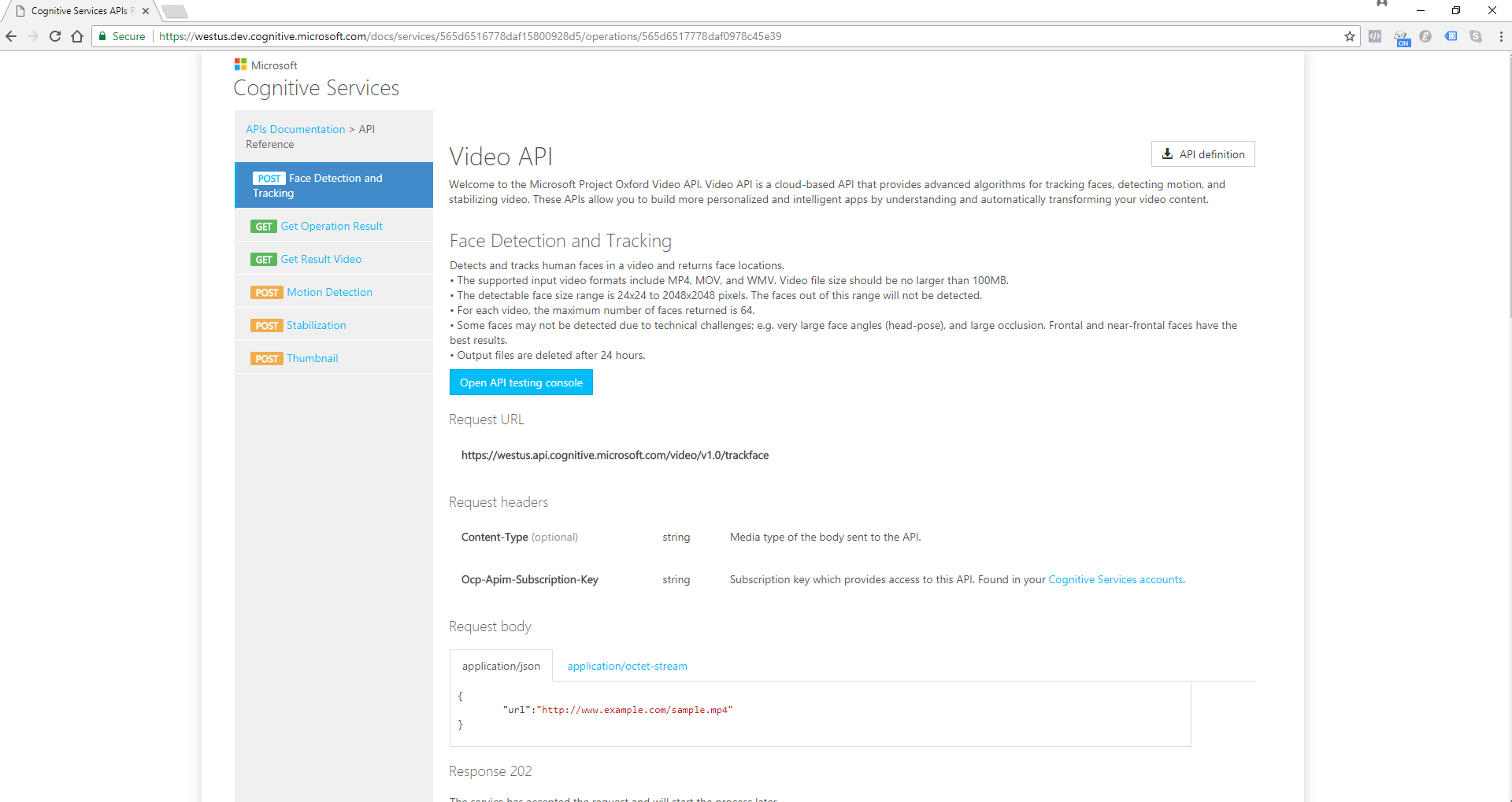

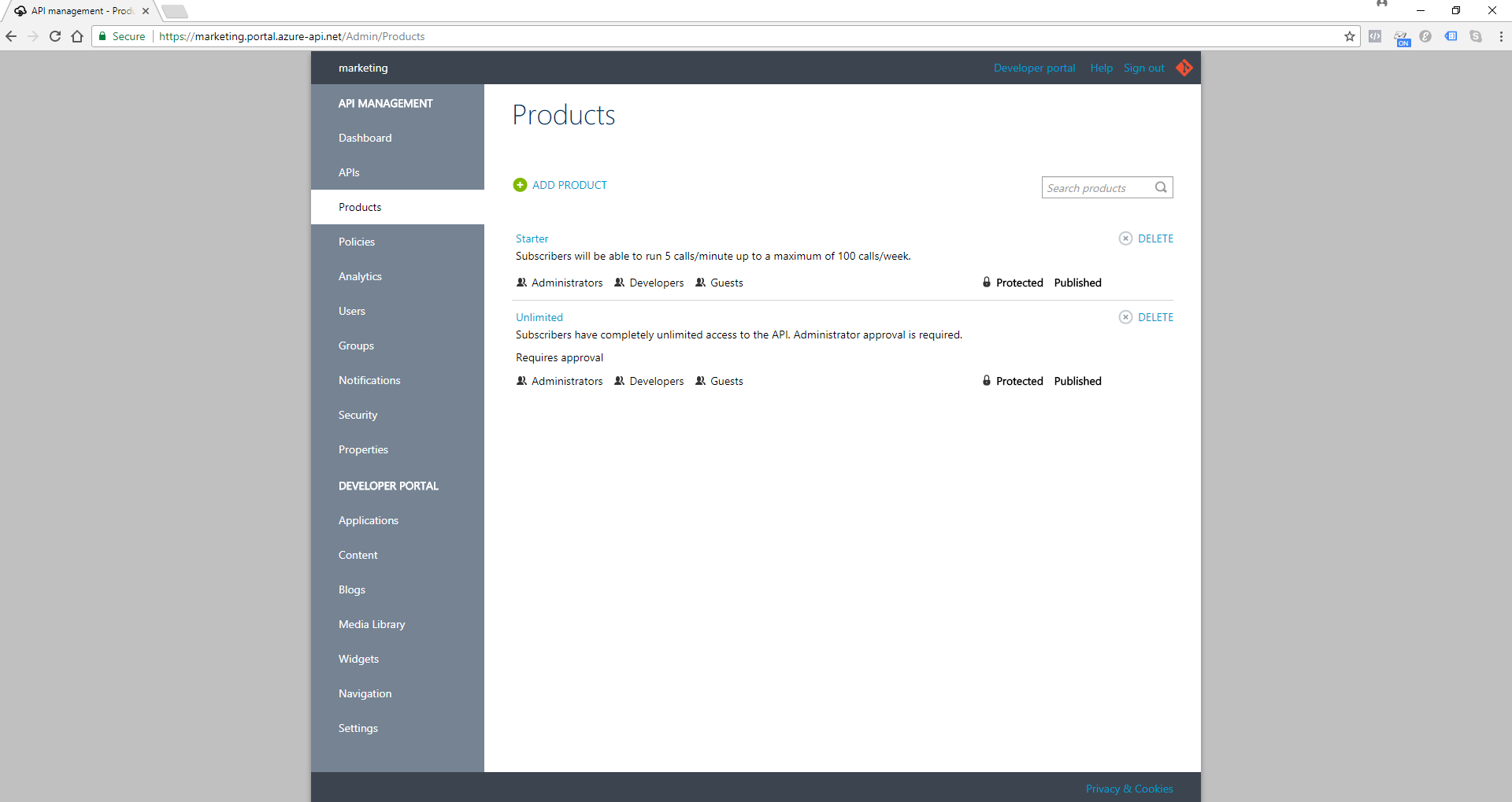

Azure API Management is a proxy that sits between our back-end services and the calling clients. It comprises three building blocks: Developer Portal, Proxy, and Publisher Portal.

The Video API console from Microsoft Cognitive Services is also powered by Developer Portal in Azure API Management.

Publisher Portal in Azure API Management allows developers to define their APIs such as how they will be exposed through products.

One of the topics in the event was Azure Media Services, an extensible cloud-based platform which enables developers to build scalable media management and delivery applications. What I love is its analysis power in Azure Media Analytics. There are a few Media Analytics services available. I will only share two of the interesting features.

Media Analytics: Video Summarization and Face Detection

Recently, video thumbnails became available on YouTube, so viewers are now able to see a quick clip that summarizes the actual YouTube video. Video summarization is in fact available on Azure Media Services as well. The Azure Media Video Thumbnails media processor enables us to create a summary of a video, which is useful to customers who just want to preview a long video. For example, the following video clip is a 15-second summarization of the 2-minute-and-49-second Silverlight PV on YouTube.

https://gclanime.blob.core.windows.net/files/video-summarization-result.mp4

Face Detection is another interesting feature that I like. It provides high-precision face location detection and tracking that can detect up to 64 human faces in a video. For example, the following two images have faces in the YouTube video, ASEAN Spirit, highlighted in another program using the JSON result returned from the Azure Media Face Detector.

There are some people in this picture not detected by Azure Media Face Detector.

Faces in noisy image can be detected by Azure Media Face Detector as well!

Isn’t it cool to see visual intelligence like face detection at work? In fact, there is now even a very easy way on Azure for us to customize our own computer vision models by uploading images and then tagging them properly. The tool is called Custom Vision Service, still in preview though.

Custom Vision Service

Firstly, we need to log in to Custom Vision at https://www.customvision.ai/. Secondly, we create a new project and then upload images to the project as training images.

We need to have at least 2 tags and 5 images in each tag before we can start training our model.

Thirdly, we can just hit the “Quick Test” button to try out our little computer vision model. The following screenshots show some of the successful and failed cases.

[Success] With just 30 images, Computer Vision can know I bought a cake!

[Success] Hmm… It looks like a food menu (It also somewhat looks like a bread? Why?)

[Failed] Ah, they are breads, not menu!

From the examples above, we know that Custom Vision Service does “image classification” but not yet “object detection.” Hence, labeling our images correctly and having a large number of training images are important to make the prediction work. Finally, Custom Vision Service also generates a Prediction URL that we can send a POST request to in order to test new images programmatically.
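
Sending an image to the Prediction URL could look roughly like the following Python sketch. The URL and key below are placeholders to be replaced with the values shown for your own project, and the header names reflect my understanding of the service, so please double-check them against the Prediction URL dialog. The request is only built, not sent.

```python
import urllib.request

# Placeholders: copy the real values from your own Custom Vision project.
PREDICTION_URL = "https://example.invalid/customvision/prediction/PROJECT_ID/image"
PREDICTION_KEY = "YOUR_PREDICTION_KEY"


def build_prediction_request(image_bytes: bytes) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the raw image bytes."""
    return urllib.request.Request(
        PREDICTION_URL,
        data=image_bytes,
        headers={
            "Prediction-Key": PREDICTION_KEY,
            "Content-Type": "application/octet-stream",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would submit the image; the JSON that comes back should list the tags with their probabilities.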

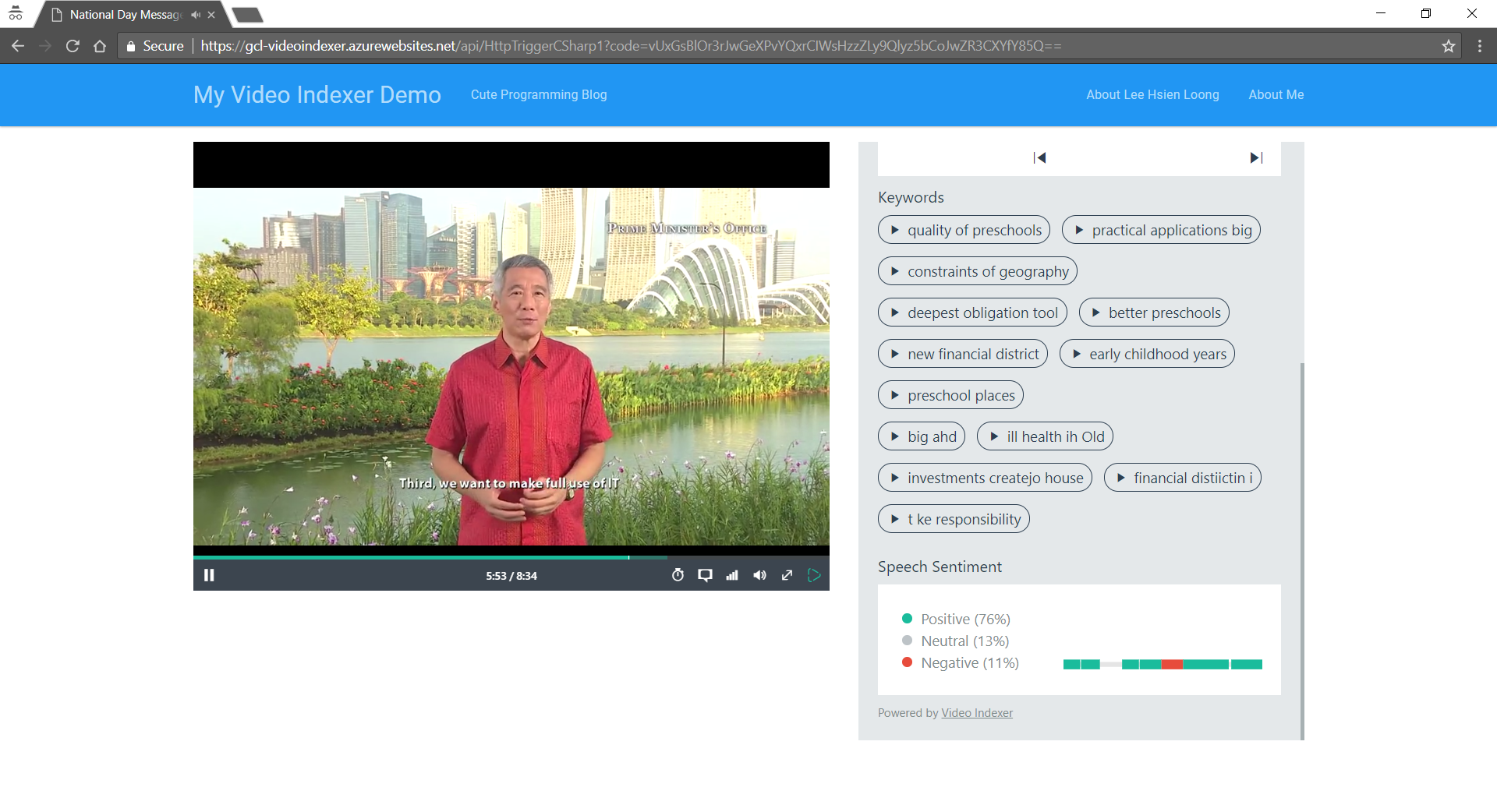

Video Indexer

Just when I thought Custom Vision Service was an exciting technology, my mind was blown away by the power of Video Indexer. With the help of media AI technologies, Video Indexer can easily extract video insights, such as speaker info, keywords, and speech sentiment. In addition, it provides a transcript and translated versions of it in several languages such as English, Chinese, Japanese, and Filipino. When I was writing this article, it was Singapore National Day. So, I uploaded the National Day message delivered by the Singapore Prime Minister to Video Indexer for analysis. Video Indexer is so powerful that it can detect that the speaker in the video is Mr Lee Hsien Loong and it can provide the biography of the Prime Minister.

PM Lee has a very positive National Day message to all fellow Singaporeans. (Link to Website) =)

Video Indexer currently allows us to embed two types of widgets into our applications, i.e. the Player and the Cognitive Insights. The above screenshot shows both widgets embedded in the same web page. However, currently there is no way to link the two widgets. The Player does not know, for example, whether any of the Keywords in the Cognitive Insights is pressed. You can try out my small example above at a simple web page hosted using Azure Functions.

DevOps, the union of people, processes, and products to enable continuous delivery of value to end users, was another highlight of the event. I have always taken an interest in DevOps and have been the “server person” in my two jobs. I’m thus always interested to see how modern tools can make my deployment processes better. During the event, when we discussed DevOps, two concepts were highlighted.

Resource Group, Azure Resource Manager (ARM) and Infrastructure as Code (IaC)

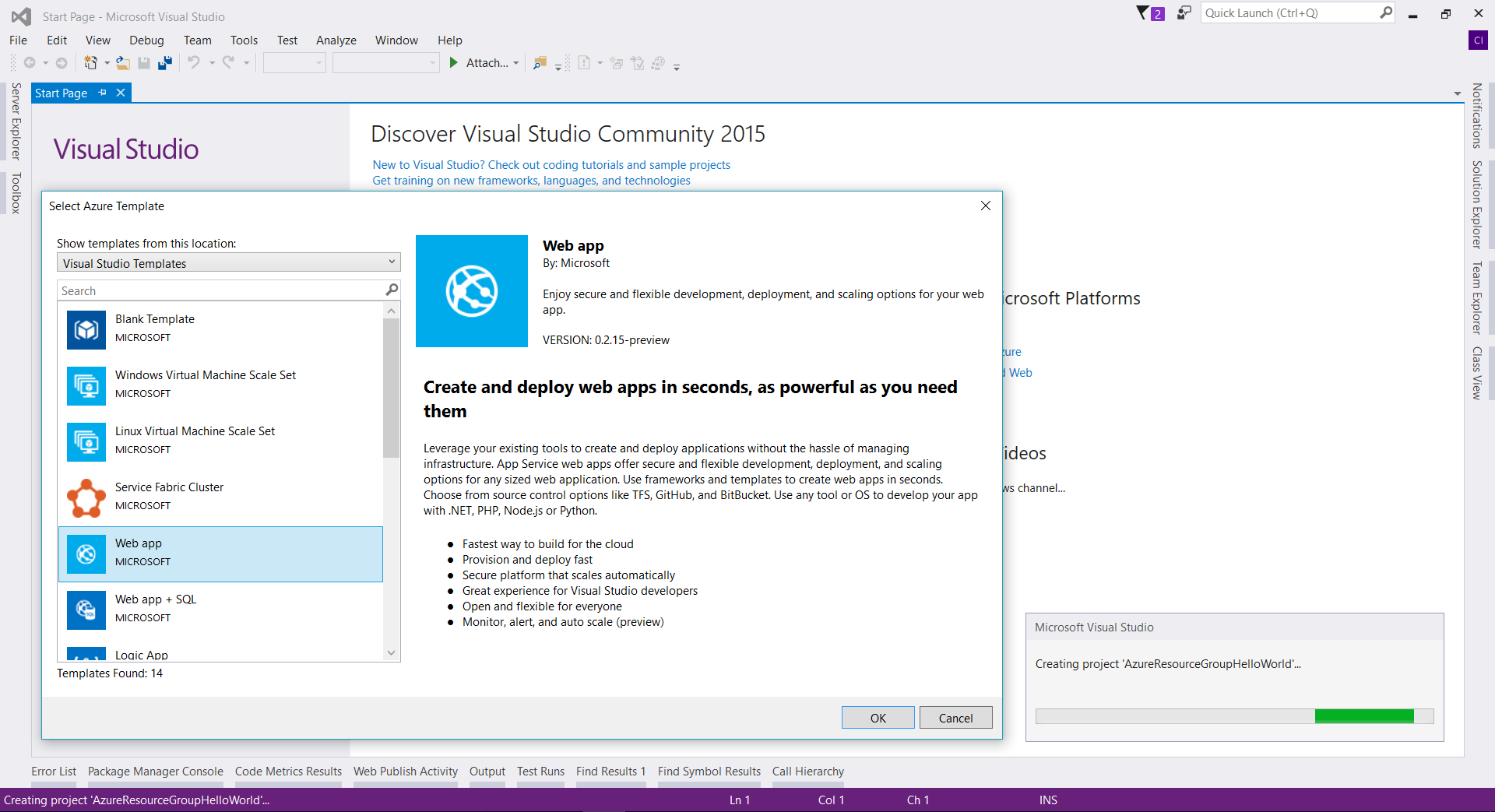

The first one is known as Resource Group. Resource Group should be a term that many Azure users are very familiar with. A Resource Group is a logical container that allows us to group individual resources, such as web apps, databases, and storage accounts, so that we can manage them together in terms of, for example, user access control and billing. Hence, all the resources in our Resource Group should share the same lifecycle, which enables us to deploy, update, and delete them together. After talking about Resource Group, we were then introduced to a tool called Azure Resource Manager. Azure Resource Manager enables us to work with the resources in our solution as a group. We can then deploy, update, or delete all the resources for our solution in a single, coordinated operation using a JSON file known as a Resource Manager Template, which defines the resources to be deployed to a resource group.

Azure Resource Group wizard on Visual Studio 2015 helps us design our resource group.

With the Resource Manager Template, we can deploy our resources consistently and repeatedly to Microsoft Azure. This leads to the second concept known as Infrastructure as Code, a process of managing and provisioning computing infrastructure declaratively, setting its configuration using definition files instead of traditional interactive configuration tools. IaC was mentioned because it is a key attribute of DevOps: it takes the confusing and error-prone aspects of manual processes and makes them more efficient and productive.
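
To give a feel for what such a definition file contains, the Python sketch below builds a very small Resource Manager Template and prints it as JSON. The single storage account, the parameter name, and the API version are my own choices for illustration; a real template for our solution would list web apps, databases, and so on.

```python
import json

# Minimal template: one parameter and one storage account resource.
template = {
    "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentTemplate.json#",
    "contentVersion": "1.0.0.0",
    "parameters": {
        "storageAccountName": {"type": "string"},
    },
    "resources": [
        {
            "type": "Microsoft.Storage/storageAccounts",
            "apiVersion": "2016-01-01",
            "name": "[parameters('storageAccountName')]",
            "location": "[resourceGroup().location]",
            "sku": {"name": "Standard_LRS"},
            "kind": "Storage",
            "properties": {},
        }
    ],
}

# The JSON text is what would be saved to a file and kept in source control.
template_json = json.dumps(template, indent=2)
print(template_json)
```

Because the template is just text, it can be reviewed, versioned, and redeployed like any other code, which is the whole point of IaC.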

What I’ve shared are some basic yet important topics for me as a developer in a non-tech/software company. There were some other advanced topics such as the DDoS defense system in Azure, data segregation, Azure Active Directory B2C, Azure Stack, the subscription model in Azure API Management, Lambda Architecture, In-Memory OLTP, DevOps Pipeline, Build Agent, Visual Studio Team Services, etc. If you are interested in learning more about all these yourself, please visit the Singapore Azure Events web page to find out more. There are still many such activities and talks coming up soon in Singapore!